| Pros | Cons |

|---|

| More scientific value? | Reviewers entirely missing the point of the paper less likely to be selected by authors | Authors may avoid some more critical reviewers (that seem to be less able to write a convincing positive review) |

|---|

| Meaningful interaction? | Reviewers receive author answers (to questions posed at positive review stage) before being asked for a decision | Dismissive reviewers may ask leading questions to influence others (mitigated by author responses) |

|---|

| Respectful? | Little bit of power given to authors (that are also experts deserving respect!) | Some wasted effort of reviewers not selected by authors |

|---|

| Considers human nature? | Some negative instincts may be inhibited if only a positive review is requested at first | Some reviewers may try to trick authors by making up positives (easy to spot?) |

|---|

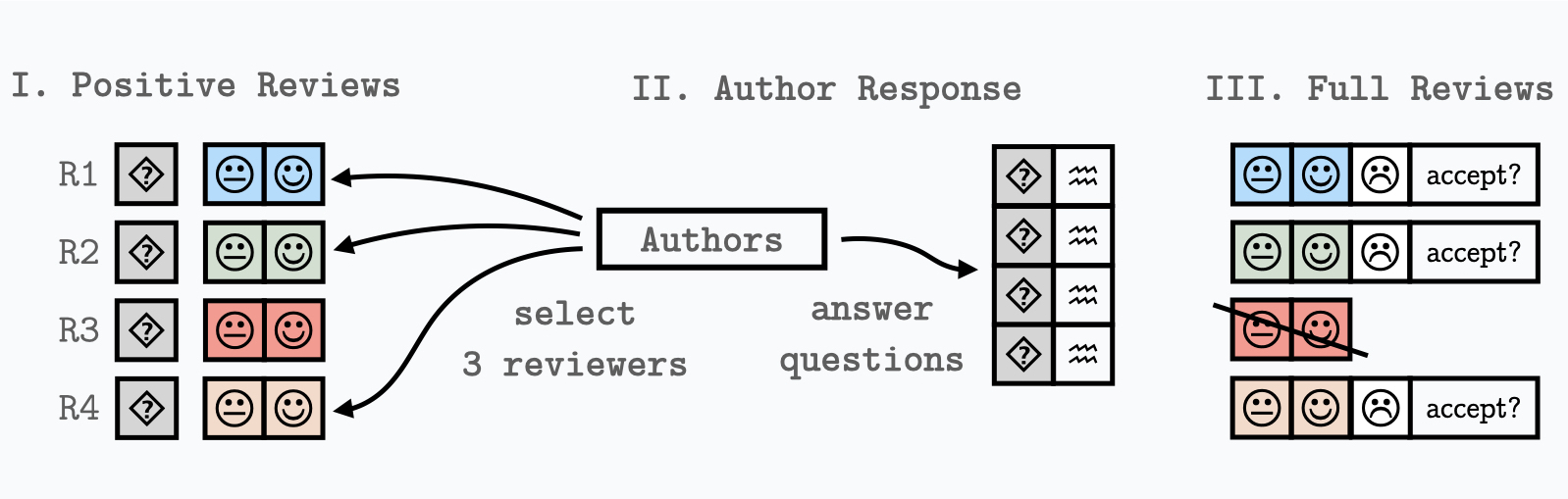

| Implementable? | Can be implemented in CMTs [1,2,3] as rebuttal to positive reviews | No rebuttal to full reviews (which is perhaps in any case too late) |

|---|

| Feasible? | Positive reviews without a decision are easier to write? | Some reviewers may refuse to write a purely positive review without a decision? |

|---|

| Emotionally sustainable? | Hope for authors with bad experiences? | Unconventional and potentially confusing for reviewers |

|---|

| Prevents accidental bidding? | More clarity on targeted progress metrics through explicit description of targeted audience | Authors/reviewers may be confused by audience descriptions (that are only visible during reviewing process) |

|---|

Some additional questions and answers: